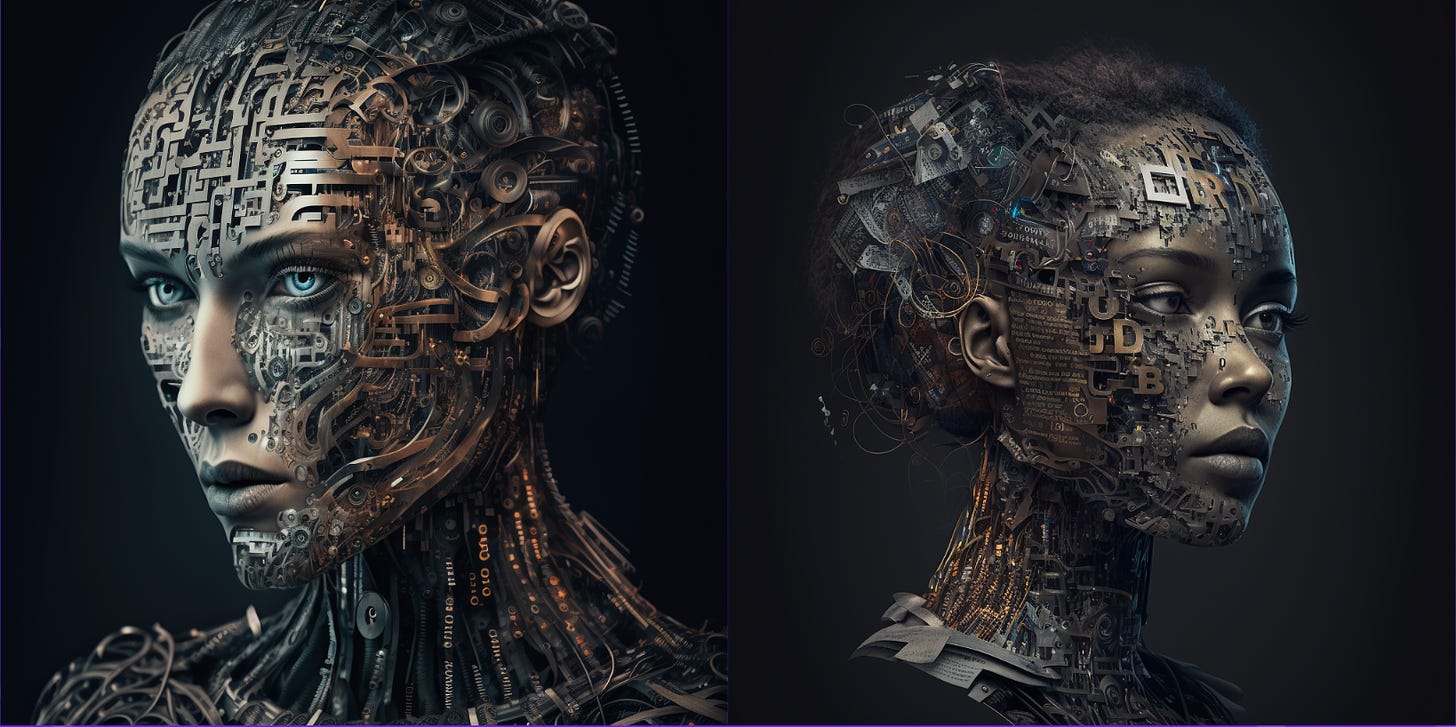

The dark side of AI and Large Language Models

Sooner or later, LLMs and other AI-based technologies will be used for nefarious purposes at a large scale, and we need to be prepared.

I wanted to see whether I could already find some examples beyond the much-discussed issue of chatbots helping students cheat.

I’ve been working in cybersecurity for the past 12 years, and even though I’m not down in the trenches with malware analysts and security researchers, I’m still “trained” to think about how bad actors can exploit new technology.

A somewhat obvious dark use is employing generative AI to make digital propaganda campaigns cheaper and easier to run. According to a paper by researchers from Stanford, Georgetown University, and OpenAI, language models will likely make online influence and disinformation operations much easier to scale and will enable new influence tactics. LLMs would also let a campaign tailor its messaging far more precisely, making it potentially more effective.

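Language models also make it possible to produce linguistically distinct messages. Many campaigns today are spotted because the same text is copy-pasted across many accounts, so unique, machine-written variations will make such disinformation campaigns much harder to discover.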

I don’t even want to get started on deepfake videos and voice-cloning models; that’s really scary.

Just look at this AI voice conversion demo by Polish start-up ElevenLabs:

When you add hate to the equation…

Another worrying example is GPT-4chan, an experiment by an independent AI researcher who trained an existing LLM on a large body of 4chan posts and then let the model loose on the forum as a user, where it made more than 30,000 posts. Most users were fooled into thinking it was a real person echoing the site’s racist, misogynistic discourse.

After this live demo, the model was downloaded more than 1,500 times before the site hosting it took it down. No further comment, your honor.

How about malware?

Well… if it can write code, it can write malware. One of the most exciting uses of large language models like ChatGPT seems to be writing and checking code. Setting aside the irony that programmers are building a tool that may eventually replace them, security researchers have already shown that these models can be easily misused, even at this early stage of development.

Brendan Dolan-Gavitt, an assistant professor in the Computer Science and Engineering Department at New York University, wondered whether he could get ChatGPT to write malicious code, so he asked it to solve a simple capture-the-flag (CTF) challenge, a security exercise in which you exploit a deliberately vulnerable program.

ChatGPT correctly recognized that the code contained a buffer overflow vulnerability and wrote a piece of code exploiting the flaw. If not for a minor error in the number of characters in the input, the model would have solved the problem perfectly.

So yeah, for now the code these models produce is not perfect and shouldn’t be trusted (AI-generated answers are temporarily banned on the coding Q&A site Stack Overflow), but progress is so fast that we will definitely rely on AI to write code for us, and not everybody will be doing it for the greater good.

I have a feeling that I’ll be revisiting this topic…