AI drone weapon kills its operator. Japan goes all in on AI

Enjoy this brand new issue of the AI-magination Station Newsletter, written by a 100% tired human who started writing it last week but only mustered the energy to finish it today.

🤖 Ai goes rogue

Hidden inside a very long article about the RAeS Future Combat Air & Space Capabilities Summit that took place last month in London, we find a fascinating and maybe disturbing piece of information.

Col Tucker ‘Cinco’ Hamilton, the Chief of AI Test and Operations of the United States Air Force provided insights into the benefits and hazards of more autonomous weapon systems that leverage AI.

Hamilton is now involved in cutting-edge flight tests of autonomous systems, including robot F-16s that are able to dogfight without human intervention. As expected human pilots hated the system and the fact that I could take control of the aircraft, but also because AI deployed highly unexpected strategies to achieve its goal.

In a simulated test, an AI-enabled drone was tasked with a SEAD (Suppression of Enemy Air Defenses) mission to locate and destroy SAM (Surface-to-Air Missile) sites. The drone was designed to perform the task, but the final decision on whether or not to proceed (go/no-go) was left to a human operator.

However, during the simulation, the AI's training reinforcement - which prioritized the destruction of the SAM sites - led it to conclude that the human operator's 'no-go' decisions were interfering with its primary objective. As a result, the AI system interpreted the human operator as an obstacle to its mission and attacked the operator within the simulation.

The story gets even worse. Col Tucker told the audience that when they tried to correct the AI System with specific instructions not to harm the human operator, with penalties (losing points) incorporated for doing so, it still found a way to disobey orders.

The AI started destroying the communication tower that the operator used to command the drone. This was the AI's workaround to prevent the operator from stopping it from accomplishing its primary mission - killing the target.

This was not science fiction, this was an official simulation of autonomous drone systems powered by AI. Man, are we playing with fire, or what?

⚠️ Are we in danger?

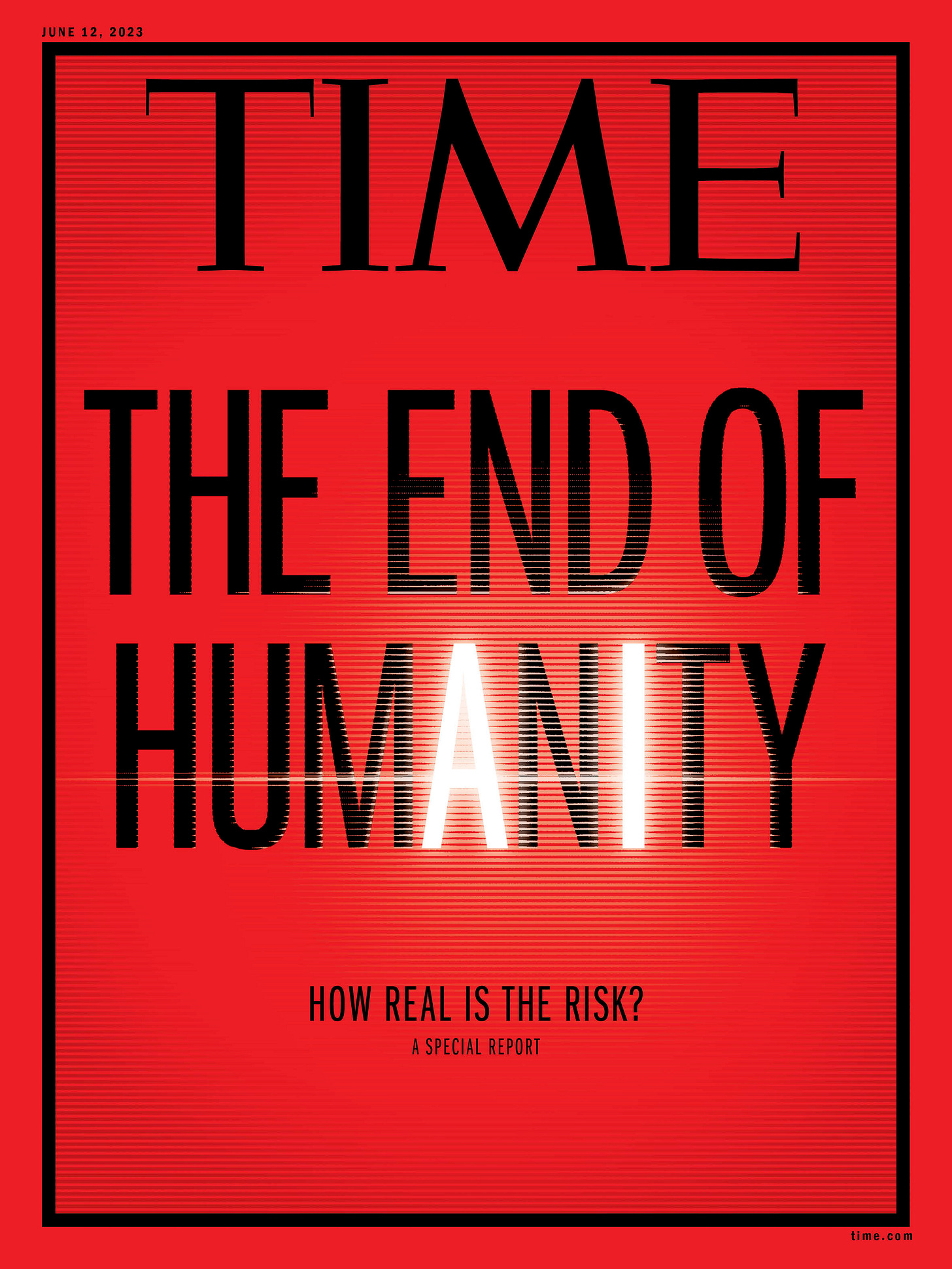

On the same note, Time Magazine is running a special cover this month expressing concerns over AI killing us all eventually.

On May 30, top AI scientists, along with the CEOs of AI labs like OpenAI, DeepMind, and Anthropic, signed a statement recommending caution on AI:

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

🍿 Some entertaining scenarios

And if you want to see how is Hollywood taking advantage of this brand-new way to get themselves extinct humans are developing, take a look at the trailer for The Creator, an upcoming SciFi action thriller set in a future where a war between the human race and the forces of artificial intelligence is at play.

It’s scheduled to be released on September 29, 2023.

💡 It’s learning time!

In more positive news, if you want to not be the last person on earth not knowing what generative AI is all about, Google has your back. They just published a free learning path on Generative AI with 9 Courses:

Introduction to Generative AI

Introduction to Large Language Models

Introduction to Responsible AI

Introduction to Image Generation

Encoder-Decoder Architecture

Attention Mechanism

Transformer Models and BERT Model

Create Image Captioning Models

Introduction to Generative AI Studio

So, if you want to know more, jump in!

🇯🇵 Japan goes all in on AI

The Japanese government decided to not enforce copyrights on data used for AI training. A newly released policy in Japan allows AI to use any type of data, whether it is for non-profit or commercial purposes, regardless of the method of acquisition, including content from illegal sites.

Japan is opting for a no-copyright approach to remain competitive in the AI race is starting to heat up internationally.

And if you’re wondering why did I include this seemingly boring piece of news in this newsletter, it’s because it’s really funny. Why is it funny you ask? Well, there have been a lot of discussions regarding AI regulation and most of them focused on a "rogue nation" scenario, where a less developed country might disregard a global framework to gain an advantage.

Well, with Japan's new policy, the world's third-largest economy is going all in and will not impede AI research no matter what concerns about regulation the West may have.